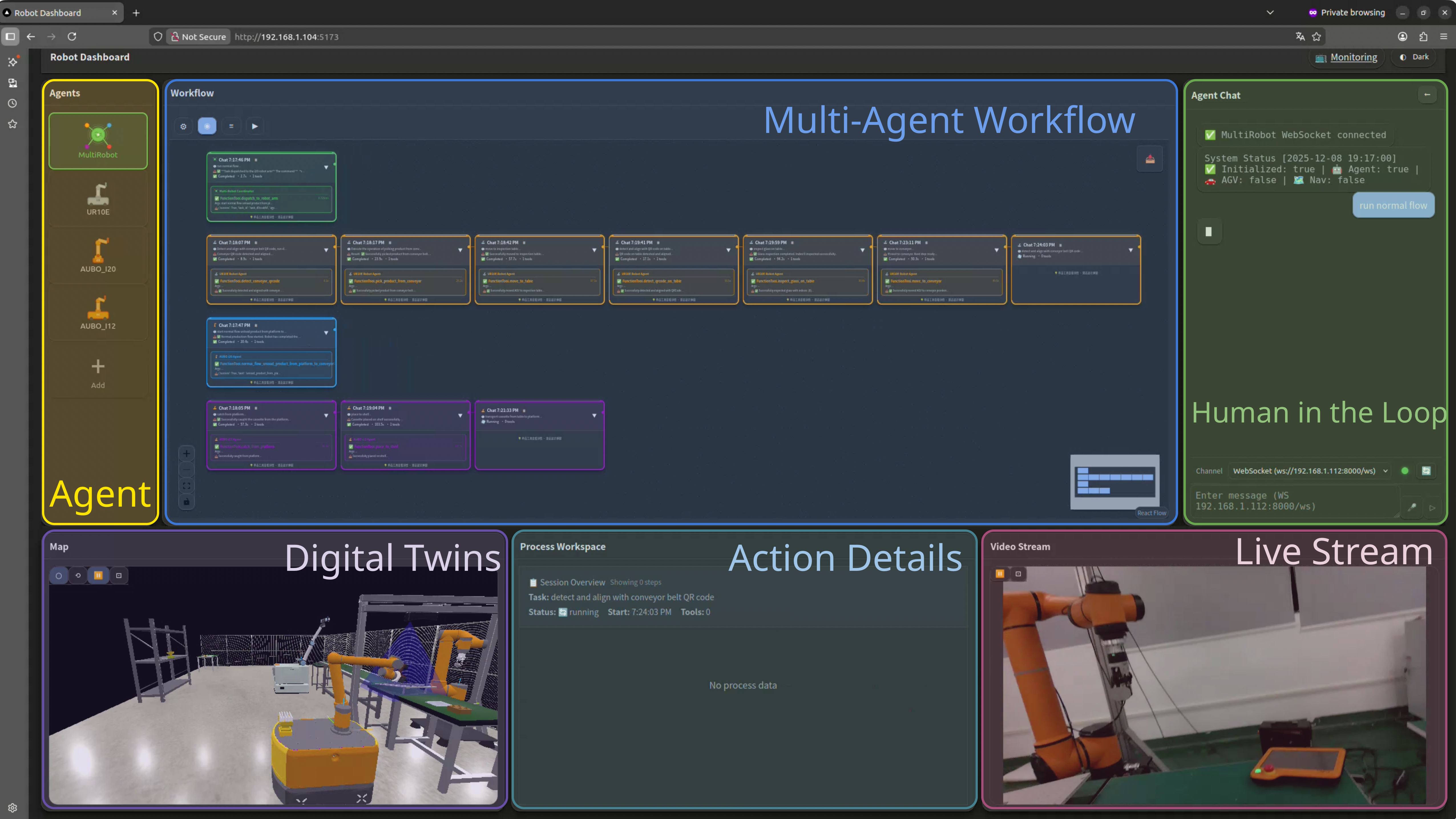

You can see each agent's role in the log.

Planner, perception, navigation, manipulation, and supervisor each show up in the trace instead of being hidden behind one controller.

Embodied Agentic Runtime is the layer that brings AI systems out of the lab and into real environments, where they can act, learn from what happens, and generate the data needed to improve over time.

One language request turns into planning, perception, manipulation, and verification steps in a live environment.

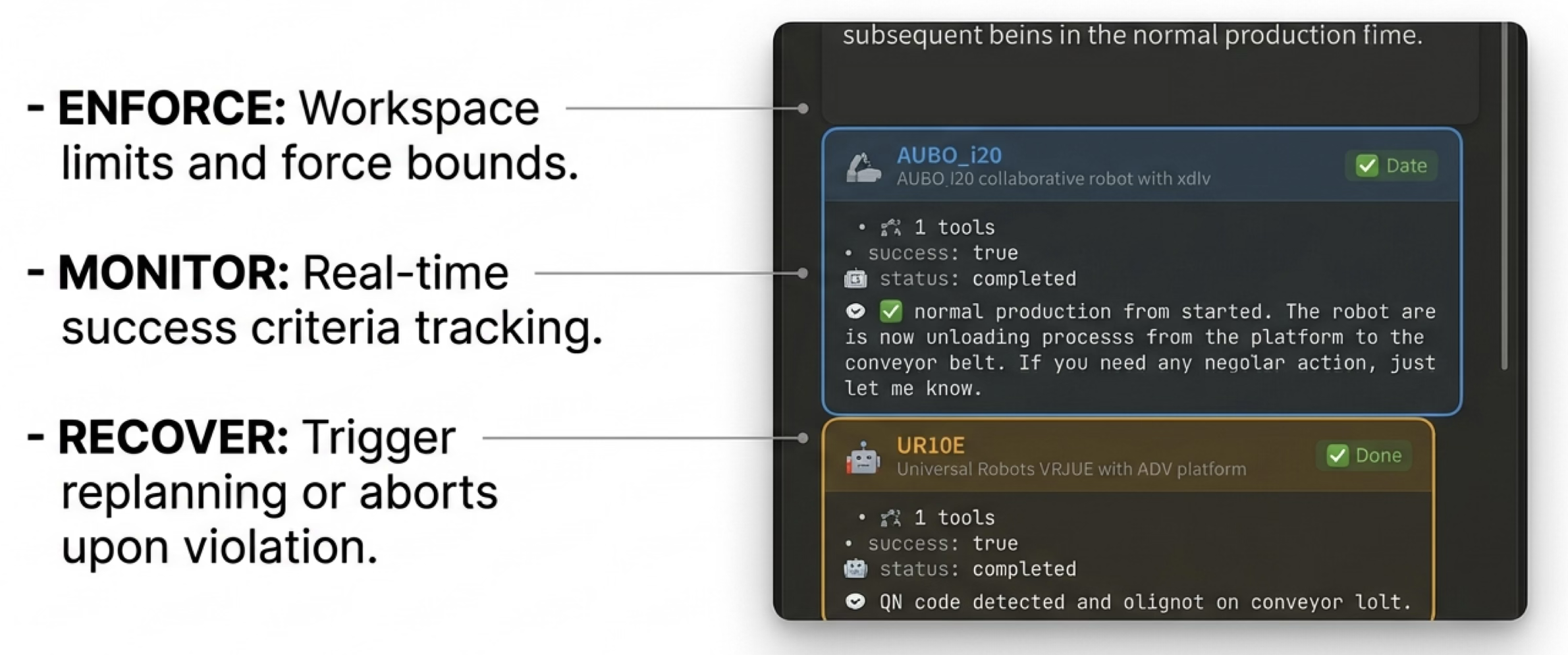

The system looks again, adjusts alignment, and updates the next move before a grasp or handoff is accepted.

A run is successful only when postconditions pass, which keeps execution supervised and easy to review.

The demo starts with a task instruction, then shows how it is planned, carried out, and checked step by step.

Natural-language request → step-by-step execution

Tool calls, agents, recoveries, and timing stay visible during the job instead of only showing up afterward.

See who acted, when they acted, and why

The same trace that supervises the runtime also captures the records needed to improve models over time.

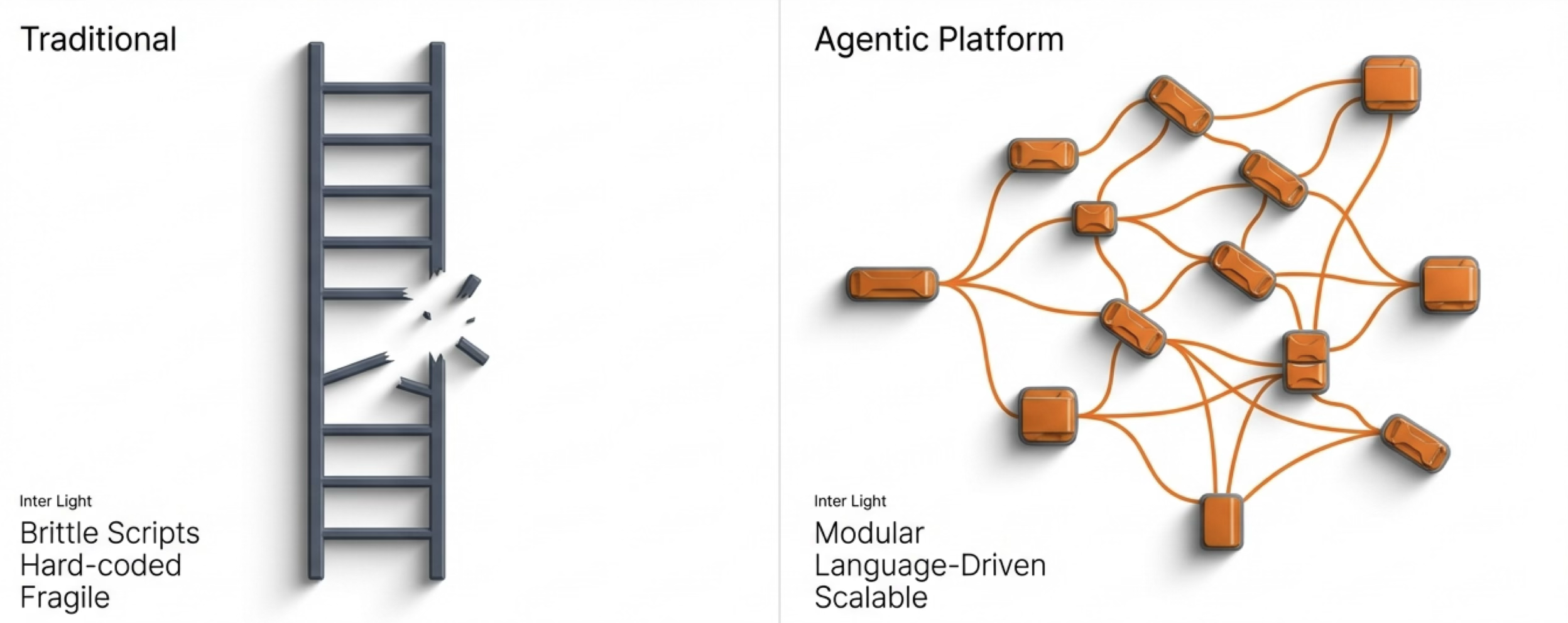

Plans, actions, video, outcomes, and interventions stay linked

Hard-coded sequences fail when the scene shifts, tolerances drift, or handoff conditions change. Our approach breaks work into readable steps, keeps the scene in context, and recovers instead of failing silently.

AI becomes useful when it can act in the world and improve from data gathered through physical interaction. Embodied Agentic Runtime is built for the first two steps: deploy in the real world, then collect interaction data at the scale needed to make the next version better.

The runtime brings intelligence into physical environments with explicit plans, verification, and human oversight.

Tasks, perception, actions, outcomes, recoveries, and operator interventions are captured at scale to support better models and more autonomy.

Agents, workflow graphs, digital twin state, process monitoring, and live video all sit in the same interface.

Different hardware and support services expose clear capabilities without splitting the orchestration layer apart.

The graph shows ordering, live state, and recovery points instead of hiding execution inside opaque controllers.

Supervisor controls keep intervention close to the running job instead of burying it in a separate panel.

Each step is grounded in both the digital twin and the camera feed, so the run stays easy to follow.

Instead of locking every action into a fixed script, the runtime turns execution into a structured process that can be inspected, adjusted, paused, resumed, or overridden. That is what makes human-in-the-loop control, recovery, and coordination across specialized agents practical.
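One way to picture an execution that can be paused, resumed, or overridden is as a small state machine with an explicit transition table. The sketch below is illustrative only: `RunState`, `ALLOWED`, `Run`, and the command names are hypothetical and are not taken from the runtime's API.

```python
from enum import Enum


class RunState(Enum):
    RUNNING = "running"
    PAUSED = "paused"
    OVERRIDDEN = "overridden"  # a human operator has taken control
    DONE = "done"


# Hypothetical transition table: which command is legal in which state.
ALLOWED = {
    (RunState.RUNNING, "pause"): RunState.PAUSED,
    (RunState.PAUSED, "resume"): RunState.RUNNING,
    (RunState.RUNNING, "override"): RunState.OVERRIDDEN,
    (RunState.PAUSED, "override"): RunState.OVERRIDDEN,
    (RunState.OVERRIDDEN, "resume"): RunState.RUNNING,
    (RunState.RUNNING, "finish"): RunState.DONE,
}


class Run:
    def __init__(self):
        self.state = RunState.RUNNING
        self.history = []  # (command, new_state) pairs, kept for later review

    def apply(self, command: str) -> RunState:
        key = (self.state, command)
        if key not in ALLOWED:
            # Reject illegal commands loudly instead of ignoring them.
            raise ValueError(f"{command!r} not allowed while {self.state.value}")
        self.state = ALLOWED[key]
        self.history.append((command, self.state))
        return self.state
```

Keeping the legal transitions in one table means every operator action is either applied and logged or rejected with an error, so the intervention history can be reviewed afterward.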

Multi-agent coordination, step-by-step execution, shared world state, typed tools, and supervision separate decision-making from action execution, so the system stays legible and open to intervention while it runs.

Hardware is exposed as typed tools with schemas, constraints, and capabilities, so backends can change without rewriting agent logic.
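As a rough illustration of how a typed tool might separate schema and constraints from the hardware backend, here is a minimal sketch. `ToolSpec`, `sim_gripper`, `real_gripper`, and `make_grip` are hypothetical names invented for this example; the 18 N limit is borrowed from the `pick-with-alignment` skill on this page.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class ToolSpec:
    name: str
    params: Dict[str, type]      # parameter name -> expected type (the schema)
    limits: Dict[str, float]     # parameter name -> maximum allowed value
    backend: Callable[..., str]  # hardware-specific implementation

    def call(self, **kwargs) -> str:
        # Validate parameter names and types against the schema.
        if set(kwargs) != set(self.params):
            raise TypeError(f"{self.name}: expected params {sorted(self.params)}")
        for key, expected in self.params.items():
            if not isinstance(kwargs[key], expected):
                raise TypeError(f"{self.name}: {key} must be {expected.__name__}")
        # Enforce constraints regardless of which backend is plugged in.
        for key, limit in self.limits.items():
            if kwargs[key] > limit:
                raise ValueError(f"{self.name}: {key} exceeds limit {limit}")
        return self.backend(**kwargs)


def sim_gripper(object_id: str, force_n: float) -> str:
    return f"sim: gripped {object_id} at {force_n} N"


def real_gripper(object_id: str, force_n: float) -> str:
    return f"hw: gripped {object_id} at {force_n} N"


def make_grip(backend: Callable[..., str]) -> ToolSpec:
    # Same schema and limits for every backend; only the implementation differs.
    return ToolSpec("grip", {"object_id": str, "force_n": float},
                    {"force_n": 18.0}, backend)
```

An agent would call `make_grip(sim_gripper).call(object_id="cassette", force_n=12.0)` in exactly the same way as the `real_gripper` version, which is the property the paragraph above describes.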

Agents query poses, plan motion, and verify placements against a shared scene representation that stays live during execution.

The system runs in readable steps with closed-loop checks and recovery instead of fire-and-forget playback.

Force and speed limits, postcondition checks, retries, and human override paths keep execution supervised and repeatable.
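The combination of postcondition checks, retries, and a human override path can be sketched as a single wrapper around each step. This is a simplified illustration under assumed names (`run_step`, `ask_operator`, and the status strings are hypothetical), not the runtime's actual control loop.

```python
from typing import Callable, Tuple


def run_step(act: Callable[[], None],
             postcondition: Callable[[], bool],
             max_retries: int = 2,
             ask_operator: Callable[[], bool] = lambda: False) -> Tuple[str, int]:
    """Act, verify, retry on failure, then escalate to a human.

    Returns (status, attempts_used).
    """
    attempts = 0
    for _ in range(1 + max_retries):
        act()
        attempts += 1
        if postcondition():
            return "verified", attempts
    # Retries exhausted: hand the decision to a human instead of failing silently.
    status = "operator_approved" if ask_operator() else "failed"
    return status, attempts
```

A step only reports `verified` when its postcondition passes, which mirrors the rule that a run counts as successful only after checks succeed.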

Specialized roles collaborate through structured tool calls instead of being forced into a single controller.

Skills are defined with clear specs, checks, and fallbacks, and the runtime pairs those definitions with a trace that shows which agent did what, when, and why.

| Step | Action | Agent(s) | Time |
|------|--------|----------|------|
| 1 | Parse task | planner | 0.0s |
| 2 | Query world state | supervisor | 1.3s |
| 3 | Navigate to shelf | nav | 9.8s |
| 4 | Detect cassette | perception | 13.7s |
| 5 | Pick + force check | manipulation | 21.4s |
| 6 | Inspect seal region | perception | 29.6s |
| 7 | Regrasp for alignment | manip + planner | 38.9s |
| 8 | Deliver to conveyor | nav + manipulation | 51.0s |
| 9 | Verify postcondition | supervisor | 58.4s |
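A trace like the one above can be mined for timing. The sketch below assumes each listed time is the moment the step started, which the page does not state; under that assumption, the gap to the next step's start approximates how long a step took. `TRACE` and `step_durations` are names made up for this example.

```python
# (action, agent, start time in seconds), copied from the trace above.
TRACE = [
    ("Parse task", "planner", 0.0),
    ("Query world state", "supervisor", 1.3),
    ("Navigate to shelf", "nav", 9.8),
    ("Detect cassette", "perception", 13.7),
    ("Pick + force check", "manipulation", 21.4),
    ("Inspect seal region", "perception", 29.6),
    ("Regrasp for alignment", "manip + planner", 38.9),
    ("Deliver to conveyor", "nav + manipulation", 51.0),
    ("Verify postcondition", "supervisor", 58.4),
]


def step_durations(trace):
    """Return (action, agent, seconds until the next step starts).

    The final step is omitted because the trace gives no end time for it.
    """
    durations = []
    for (action, agent, start), (_, _, next_start) in zip(trace, trace[1:]):
        durations.append((action, agent, round(next_start - start, 1)))
    return durations


if __name__ == "__main__":
    for action, agent, seconds in step_durations(TRACE):
        print(f"{action:<24}{agent:<20}{seconds:>5.1f}s")
```

Read this way, the longest interval is the 12.1 s between the start of the regrasp step and the start of delivery, the kind of signal a team might use to decide where to focus improvement.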

Recover grasp quality when the initial pickup is unstable or offset.

    name: pick-with-alignment
    agents: [perception, manipulation, supervisor]
    stages:
      - detect_candidates(top_k=5)
      - score_grasps(force_limit_n=18)
      - pick(best)
      - regrasp(if tilt_deg > 7)
    fallback: "request_new_view"
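To make the stages of `pick-with-alignment` concrete, here is one possible reading in code. It is a sketch under assumptions: `Grasp`, `pick_with_alignment`, and `measure_tilt_deg` are hypothetical, `score_grasps(force_limit_n=18)` is interpreted as discarding grasps that would need more than 18 N, and candidates are assumed to arrive ordered by detector confidence.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Grasp:
    score: float             # higher is better
    required_force_n: float  # force the grasp would need


def pick_with_alignment(candidates: List[Grasp],
                        measure_tilt_deg: Callable[[Grasp], float],
                        top_k: int = 5,
                        force_limit_n: float = 18.0,
                        max_tilt_deg: float = 7.0) -> List[str]:
    """Return the list of actions the skill would take."""
    # detect_candidates(top_k) + score_grasps(force_limit_n): keep feasible grasps.
    feasible = [g for g in candidates[:top_k] if g.required_force_n <= force_limit_n]
    if not feasible:
        return ["request_new_view"]  # fallback from the skill spec
    best = max(feasible, key=lambda g: g.score)
    actions = [f"pick(score={best.score})"]
    # regrasp only if the object ends up tilted past the threshold.
    if measure_tilt_deg(best) > max_tilt_deg:
        actions.append("regrasp")
    return actions
```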

Route the object to rework or quarantine based on visual QA and tolerance checks.

    name: visual-qa-gate
    tools: [rgbd_detector, visual_qa, reject_gate]
    checks:
      seal_gap_mm: 0.8
      label_present: true
      surface_glare: "auto_compensate"
    on_fail: "quarantine_bin"
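A gate like `visual-qa-gate` could be evaluated as a pure function over inspection measurements. The sketch below makes two assumptions the page does not confirm: `seal_gap_mm: 0.8` is treated as a maximum allowed gap, and the passing destination `next_station` is a placeholder. `Inspection` and `route` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Inspection:
    seal_gap_mm: float
    label_present: bool


# Values mirror the visual-qa-gate skill; reading seal_gap_mm as an
# upper bound is an assumption.
CHECKS = {"seal_gap_mm": 0.8, "label_present": True}
ON_FAIL = "quarantine_bin"


def route(inspection: Inspection,
          pass_route: str = "next_station") -> Tuple[str, List[str]]:
    """Return (destination, names of failed checks)."""
    failures = []
    if inspection.seal_gap_mm > CHECKS["seal_gap_mm"]:
        failures.append("seal_gap_mm")
    if inspection.label_present != CHECKS["label_present"]:
        failures.append("label_present")
    return (ON_FAIL if failures else pass_route), failures
```

Returning the failed check names alongside the destination keeps the routing decision explainable in the trace.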

Synchronize mobile base timing with a downstream handoff target.

    name: conveyor-intercept
    agents: [nav, supervisor]
    params:
      target_lane: 3
      eta_slack_s: 2.5
      geofence: "conveyor_zone"
    retry: "replan_if_lane_blocked"

Coordinate arm, AGV, and supervisor checkpoints for a reliable handoff.

    name: multi-robot-transfer
    sequence:
      - approach(sync_pose=true)
      - micro_align(vision=true)
      - transfer(force_limit_n=12)
      - verify_release()
    abort_if: "postcondition_timeout"

Embodied Agentic Runtime is built for robotics teams moving from demos to production, integrators coordinating mixed hardware, and embodied AI teams that need real-world data at scale. The runtime brings robots into real environments, verifies each interaction, and turns every run into data the next version can learn from.

    goal: "Run a real task in a production cell"
    runtime: EmbodiedAgenticRuntime
    loop:
      1. plan
      2. act
      3. verify
      4. log
    result: verified execution on the floor

    captures:
      - task request + structured plan
      - tool calls, params, constraints, results
      - world-state snapshots + scene graph
      - synchronized video streams
      - success / fail / retry outcomes
      - supervisor decisions + interventions
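The captured items above suggest a record in which plan, tool calls, outcomes, video offsets, and interventions share one structure. The following is a minimal sketch of such a record, covering only a subset of the listed fields; `StepRecord`, `RunRecord`, and the JSON Lines layout are assumptions made for illustration, not the runtime's storage format.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Any, Dict, List, Optional


@dataclass
class StepRecord:
    step: int
    agent: str
    tool: str
    params: Dict[str, Any]
    outcome: str                        # "success", "fail", or "retry"
    video_ts_s: float                   # offset into the synchronized video
    intervention: Optional[str] = None  # supervisor decision, if any


@dataclass
class RunRecord:
    task_request: str
    plan: List[str]
    steps: List[StepRecord] = field(default_factory=list)

    def to_jsonl(self) -> str:
        """Serialize as JSON Lines: one header line, then one line per step."""
        header = {"task_request": self.task_request, "plan": self.plan}
        lines = [json.dumps(header)]
        lines += [json.dumps(asdict(s)) for s in self.steps]
        return "\n".join(lines)
```

Because every step carries a video offset and an optional intervention, a reviewer or a training pipeline can jump from an outcome to the footage and the human decision attached to it.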

    while deployments grow:
        collect_real_world_interactions()
        build_better_datasets()
        train_better_models()
        expand_autonomy()
    # More tasks → more real-world interaction
    # → better models → more autonomy